Futurologists Predict Classrooms Will Be Replaced By 3D Worlds

Classrooms will be completely replaced by computer-simulated 3D worlds within 25 years – and pupils will be DNA tested to identify their academic strengths, according to futurologists.

The virtual environments will enable paleontology students to ‘walk’ among dinosaurs in the wild, marine studies scholars to observe ocean life ‘underwater’, and astronomy classes to be hosted on board a starship.

DNA testing will predict subjects that students are likely to excel in, enabling teachers to focus on their strengths from an early age.

Brain-computer technology will be used to continually monitor mental and emotional well-being, identifying imminent signs of student burnout or stress.

Classes are also likely to be skill-based rather than age-related, increasing the rate of educational progress.

And AI technology will provide instant in-person translation, meaning students of many different nationalities can learn together.

The predictions were made in a white paper written by Tracey Follows, one of the world’s top 50 female futurists and Visiting Professor of Digital Futures and Identity.

Commissioned by EdTech provider GoStudent, the report – ‘The End Of School As You Know It: Education in 2050’ – highlights the integral role tech will play in the future of education.

Follows, whom Forbes named in its list of the world’s top 50 female futurists, said: “The future of education is incredibly exciting and dynamic.

“With the rapid progressions in technology, we are on the brink of a technological explosion that will change how the entire world operates.

“Education will be at the epicenter of that change. The shift towards immersive learning, AI-driven personalization, and continuous monitoring is set to revolutionize how we learn and adapt.

“The educational journey will be tailored to each individual’s purpose and passion, with the classroom expanding beyond its physical constraints and revolutionizing education.

“By looking ahead to 2050, we get a glimpse of what is to come – and the results are fascinating.”

GoStudent, an online tutoring provider and education platform, is at the forefront of transforming education through tailored 1:1 learning, looking to leverage technological advancements to progress the education system and, ultimately, empower students worldwide.

Co-founder and CEO Felix Oswald said: “Education is at an inflection point. Historically, learning was exclusive, very personalized and highly inaccessible.

“We’re excited to see how education will evolve, and what this means for us as we continue our mission to reimagine education.”

Produced in association with SWNS Talker

Google’s Race For AI Dominance Against Microsoft Still On, Alphabet CEO Sundar Pichai Suggests

“I want people to know that we made them dance,” said Microsoft (NASDAQ:MSFT) CEO Satya Nadella in a recent interview, when discussing his company’s success in beating Google to the punch on artificial intelligence.

For Sundar Pichai, CEO of Alphabet (NASDAQ:GOOGL) (NASDAQ:GOOG) — Google’s parent company — it doesn’t matter who gets the early start, but rather who wins the race.

Earlier this year, Microsoft released a generative AI chatbot based on ChatGPT as a part of its Bing search engine. Google was forced to rush out an untested version of its own search engine chat companion, Bard.

“In cricket, there’s a saying that you let the bat do the talking,” said Pichai in an interview with Wired’s Steven Levy. Besides serving as a snappy reference to the national sport of both Indian-born executives, the saying explains Pichai’s vision for the role that AI could have in his company’s future.

“It really won’t matter in the next five to 10 years,” said Pichai, who sees a silver lining in competitors releasing earlier versions of generative AI to the public: he believes Google can do even more after people have seen and understood how AI technology works.

In April, Pichai ordered Google’s two separate artificial intelligence divisions to merge, creating one super-powerful partnership between two of the world’s most advanced AI labs, DeepMind and Google Brain.

“They were focused on different problems, but there was a lot more collaboration than people knew,” he said. Some commentators, however, read the merger as a panic-fueled measure to speed up the development of AI against rising competition from Microsoft and other companies like Amazon (NASDAQ:AMZN), which has been pushing hard on AI in its cloud services since April.

Late last month, Google Cloud CEO Thomas Kurian announced that Google is also planning to expand its AI offering within cloud services. For this, the company’s collaboration with Nvidia (NASDAQ:NVDA) as the foremost producer of the high-profile chips needed for large language model training is key. Pichai said he’s optimistic about the long-lasting relationship between the two companies.

Amazon’s Alexa also stands as a competitor in Google’s race to extend its web dominance into the conversational AI field. Google recently “supercharged” Google Assistant (which is Android’s version of Apple’s Siri) with generative AI capabilities.

Pichai says that since becoming Google’s CEO in 2015, “it was clear that deep neural networks were going to profoundly change everything.”

That’s the reason Alphabet is now internally considered to be an AI-first company, with large language models receiving the largest chunk of the R&D budget.

Pichai says he and his company are firm supporters of government regulation for AI. The dangers that rapid AI penetration poses for society are so large that the White House has been consistently pushing for regulation, with President Joe Biden recently saying that “we’ve got to move fast here.”

Pichai said: “AI is one of the most profound technologies we will ever work on. There are short-term risks, midterm risks and long-term risks.”

In the short term, the so-called “hallucination” of large language models, in which conversational AI makes up data to satisfy a user’s query, is among the most troublesome challenges.

“So right now, responsibility is about testing it for safety and ensuring it doesn’t harm privacy and introduce bias,” he said.

In the medium term, the impact of AI on the workforce becomes a more urgent matter. Its effect on the labor market could have vast consequences for the global economy, and that’s another place where regulation can help to achieve a soft landing.

In the long term, the worries about AI are whether building machines that are smarter than us poses an existential threat to humanity and whether more advanced models will be aligned with human values.

© 2023 Zenger News.com. Zenger News does not provide investment advice. All rights reserved.

Produced in association with Benzinga

Edited by Arnab Nandy and Newsdesk Manager

‘Brainless’ Robot Masters Navigating Through Complex Mazes

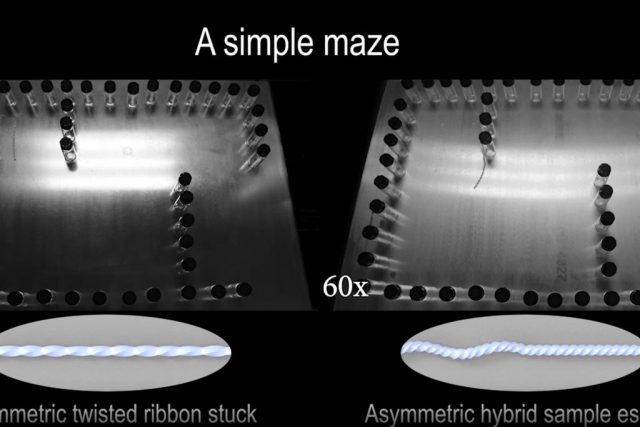

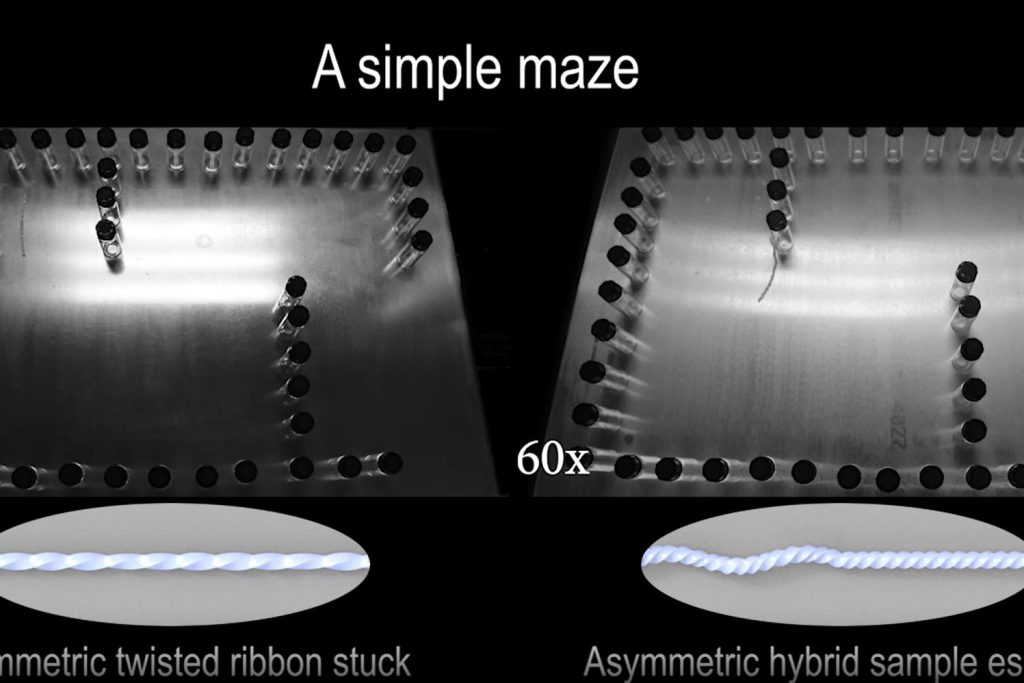

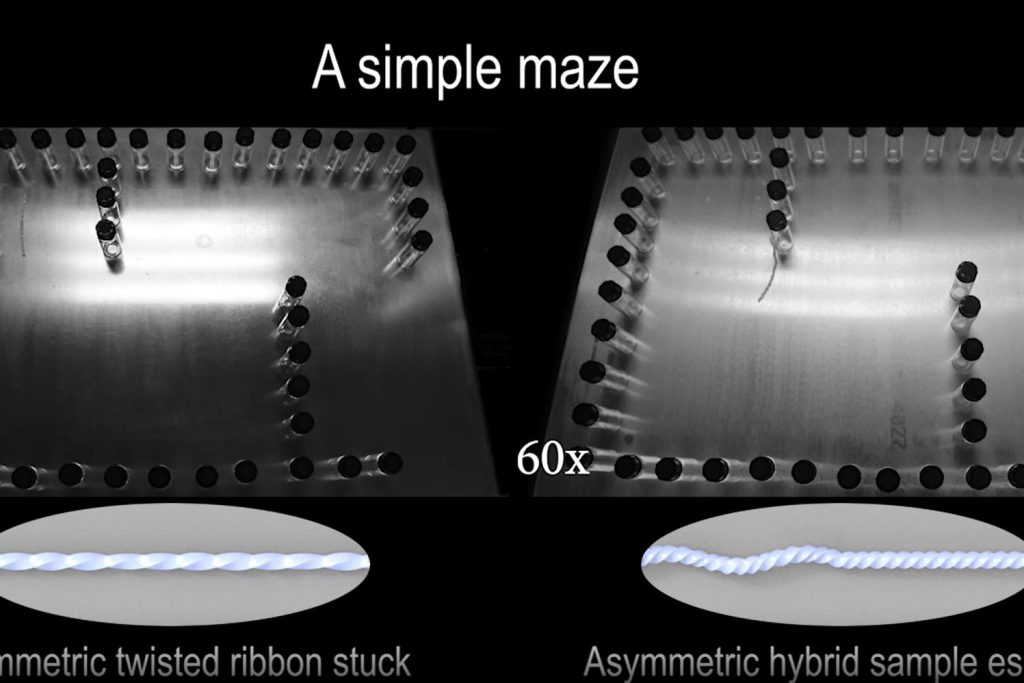

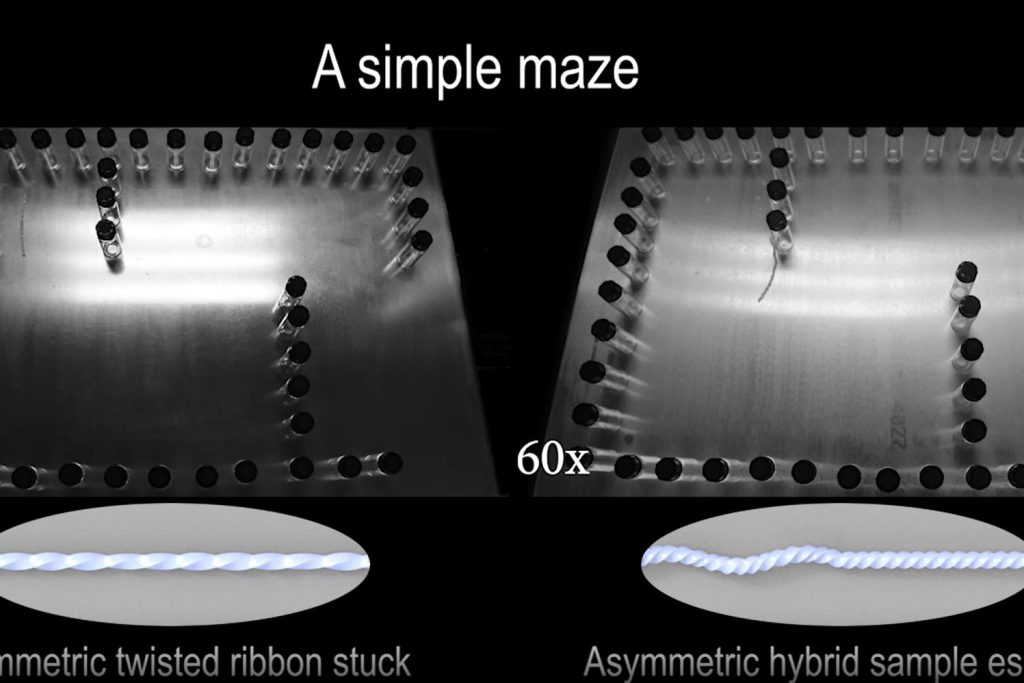

A “brainless” robot that can navigate its way around complex mazes has been developed.

Researchers have shown the ability of the soft robot to navigate mazes with moving walls – and fit through spaces narrower than its body size.

They have tested the new robot design both on a metal surface and in sand, with similar success.

Scientists say it rolls like a plastic cup, which is wider at the top than at its base.

The team at North Carolina State University previously created a soft robot that could navigate simple mazes without human or computer direction.

Now they have taken their work a major step forward by creating a “brainless” soft robot that can navigate more complex and dynamic environments.

Dr. Jie Yin said: “In our earlier work, we demonstrated that our soft robot was able to twist and turn its way through a very simple obstacle course.

“However, it was unable to turn unless it encountered an obstacle.

“In practical terms, this meant that the robot could sometimes get stuck, bouncing back and forth between parallel obstacles.

“We’ve developed a new soft robot that is capable of turning on its own, allowing it to make its way through twisty mazes, even negotiating its way around moving obstacles.

“And it’s all done using physical intelligence, rather than being guided by a computer.”

He explained that “physical intelligence” refers to dynamic objects – such as soft robots – whose behavior is governed by their structural design and the materials they are made of, rather than being directed by a computer or human intervention.

As with the earlier version, the new soft robots are made of ribbon-like liquid crystal elastomers.

When the robots are placed on a surface that is at least 55 degrees Celsius (131 degrees Fahrenheit), which is hotter than the ambient air, the portion of the ribbon touching the surface contracts, while the portion of the ribbon exposed to the air does not.

That induces a rolling motion; the warmer the surface, the faster the robot rolls.

While the previous version of the soft robot had a symmetrical design, the new robot has two distinct halves.

One half is shaped like a twisted ribbon that extends in a straight line, while the other is shaped like a more tightly twisted ribbon that also twists around itself like a spiral staircase.

The research team says that the asymmetrical design means that one end of the robot exerts more force on the ground than the other end.

Dr. Yin said: “Think of a plastic cup that has a mouth wider than its base.

“If you roll it across the table, it doesn’t roll in a straight line – it makes an arc as it travels across the table. That’s due to its asymmetrical shape.”
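The cup analogy can be checked with a little geometry. The sketch below is an illustration only, not the researchers' model: it assumes an idealized rigid cup (a truncated cone) rolling on its side without slipping, and the cup dimensions are hypothetical. Under those assumptions the cup pivots about the tip of the imaginary cone it belongs to, so each rim traces a circle whose radius follows from similar triangles.

```python
def rolling_arc_radii(small_radius, large_radius, slant_length):
    """Radii of the circles traced by the two rims of a tapered cup
    rolling on its side without slipping (idealized rigid cone)."""
    if not 0 < small_radius < large_radius:
        raise ValueError("expected 0 < small_radius < large_radius")
    if slant_length <= 0:
        raise ValueError("slant_length must be positive")
    # The rim radius grows linearly with distance from the cone's tip,
    # so the tip-to-base distance follows from similar triangles.
    inner = slant_length * small_radius / (large_radius - small_radius)
    outer = inner + slant_length
    return inner, outer


# Hypothetical cup: 2.5 cm base radius, 4 cm mouth radius, 12 cm side length.
inner, outer = rolling_arc_radii(2.5, 4.0, 12.0)
print(f"base traces a circle of radius {inner:.1f} cm")
print(f"mouth traces a circle of radius {outer:.1f} cm")
```

The more the two ends differ in size, the tighter the arc; as the radii approach each other the arc radius grows without bound, which corresponds to the straight-line rolling of a symmetrical shape.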

First author Dr. Yao Zhao, a postdoctoral researcher at NC State, said: “The concept behind our new robot is fairly simple: because of its asymmetrical design, it turns without having to come into contact with an object.

“So, while it still changes directions when it does come into contact with an object – allowing it to navigate mazes – it cannot get stuck between parallel objects.

“Instead, its ability to move in arcs allows it to essentially wiggle its way free.”

Dr. Yin added: “This work is another step forward in helping us develop innovative approaches to soft robot design – particularly for applications where soft robots would be able to harvest heat energy from their environment.”

The findings were published in the journal Science Advances.

Produced in association with SWNS Talker

Google Cracks Down On AI-Altered Election Ads

In a bid to enhance transparency and accountability in political advertising, Alphabet Inc.’s Google is rolling out a new policy that will require election advertisers to disclose the use of artificial intelligence tools in crafting their messages.

Starting mid-November, this policy will have significant implications for election campaigns across Google’s platforms, Bloomberg reported Wednesday.

Under this policy update, election advertisers utilizing generative AI, software capable of creating or modifying content based on a simple prompt, must prominently display disclosure messages in their ads.

These messages must explicitly state when content has been altered by AI, such as “This audio was computer generated” or “This image does not depict real events.” The policy excludes minor adjustments like image resizing or brightness adjustments.

This move reflects Google’s commitment to transparency in political advertising, especially in an era where AI tools, including its own, are increasingly used to create synthetic content.

Michael Aciman, a Google spokesperson, notes that this policy aims to support responsible political advertising and provide voters with the information they need to make informed choices.

It’s important to note that Google’s new policy does not extend to videos uploaded on YouTube, even if they are related to political campaigns.

The same is also true for Meta Platforms Inc. (NASDAQ:META)’s Facebook and Instagram, as well as for Elon Musk’s X, formerly known as Twitter.

Google’s decision to enact this policy comes in response to growing pressure to combat misinformation on its platforms, particularly false claims related to elections and voting.

In the past, Google has taken steps such as identity verification for election advertisers and targeting restrictions for election ads. The company has also provided a transparency center for the public to track election ad purchases, spending, and impressions on its platforms.

Shares of Alphabet Inc. closed 1% lower at $135.37 on Wednesday.

Produced in association with Benzinga

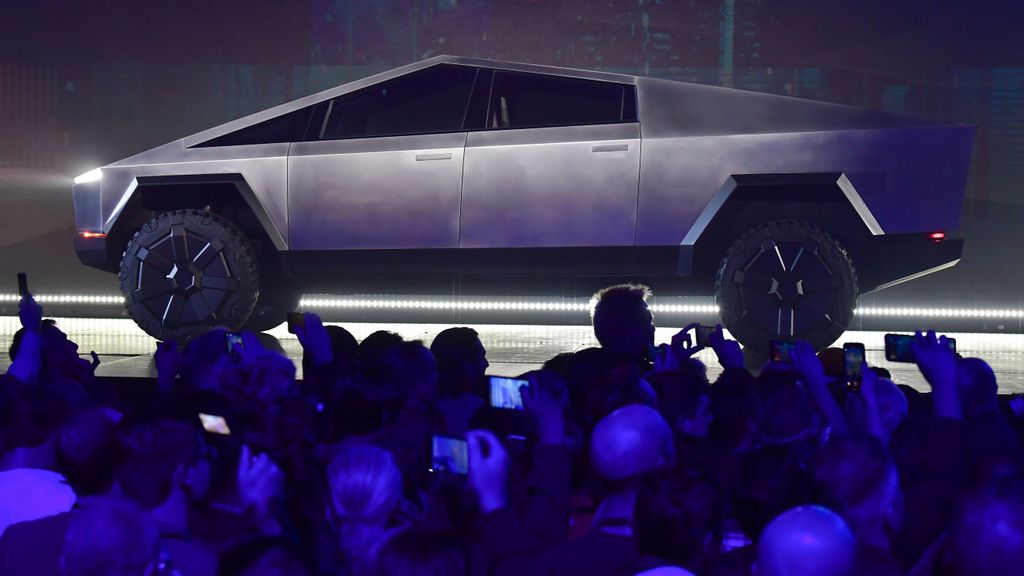

Tesla Analyst Predicts Cybertruck’s Price Range, And It Seems To Be Cheaper Than Ford F-150 Lightning

Tesla, Inc. (NASDAQ:TSLA) is nearing the commercial launch of its much-awaited Cybertruck and rumors abound regarding the specifications and the pricing.

Tesla investor and Future Fund Managing Partner Gary Black shared his expectations concerning the electric pickup truck.

What Happened: Black said he expects three variants of the Cybertruck to roll out when Tesla launches it later this year.

| Variant | Range | 0-60 mph acceleration | Pricing |
| --- | --- | --- | --- |
| Single-motor | 250 miles | 6.5 seconds | $49,900 |
| Dual-motor | 300 miles | 4.5 seconds | $59,900 |
| Quad-motor | 400 miles | 2.9 seconds | $79,900 |

Gary Black’s predictions for the Cybertruck

The estimated pricing will keep the vehicle under the IRA cap for pickups, the fund manager said.

“My guess is they start with quad motor delivs [sic] first since initial start-up costs will be high, then go to dual motor, then single motor,” Black said.

Why It’s Important: Ford Motor Co.’s (NYSE:F) F-150 Lightning lineup begins with the base trim, known as the F-150 Lightning Pro. It boasts dual motors and starts at $49,995, offering an EPA range of 240 miles. It was initially priced at $59,974, but shortly after Tesla confirmed the production of its first Cybertruck, Ford reduced the price by roughly $10,000.

On the other end of the spectrum, the top-tier F-150 Lightning Platinum kicks off at $91,995.
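One way to put the entry-level comparison on a common footing is price per mile of range. The sketch below uses only the figures quoted in this article; choosing price per mile as the yardstick is an assumption made here for illustration, not a metric Black used, and the Cybertruck numbers are his predictions rather than announced specifications.

```python
def price_per_mile(price_usd, range_miles):
    """Sticker price divided by rated range, in dollars per mile."""
    return price_usd / range_miles


# Figures as quoted in the article; Cybertruck values are predictions.
entry_trims = {
    "Cybertruck single-motor (predicted)": (49_900, 250),
    "F-150 Lightning Pro": (49_995, 240),
}

for name, (price, miles) in entry_trims.items():
    print(f"{name}: ${price_per_mile(price, miles):.2f} per mile of range")

# Size of Ford's price cut on the Pro trim, from the two quoted prices.
print(f"Ford Pro price cut: ${59_974 - 49_995:,}")
```

On these numbers the predicted base Cybertruck comes out slightly cheaper both in sticker price and per mile of range, which is consistent with the headline's claim.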

Black is among the analysts who are uber-bullish on the Cybertruck. He expects Tesla’s 2024 volume estimates to move sharply higher, thanks to the Cybertruck launch along with the Model 3 refresh and full self-driving V12 L4 coming to fruition. Volume growth will begin to accelerate in the fourth quarter, the fund manager said.

“Today analysts are forecasting FY’24 volume growth of just +27% (after +40% growth in FY’23) which seems absurdly low with three major volume catalysts on the horizon,” he added.

Black sees a scenario similar to the one that followed the launch of the Model Y in 2020, which lifted Tesla’s volume growth from 36% in 2020 to 87% in 2021.

“There will be a huge halo effect that ignites the entire $TSLA franchise by interest generated from Cytruck and M-3 Refresh,” he said.

The fund manager said he has high conviction based on what happened with the Model Y in 2021. “How can the first $TSLA pickup with 2M pre-orders be a flop?? CT will be a huge hit,” he said.

Tesla shares closed Wednesday’s session down 1.78% at $251.92, according to Zenger News Pro data.

Produced in association with Benzinga